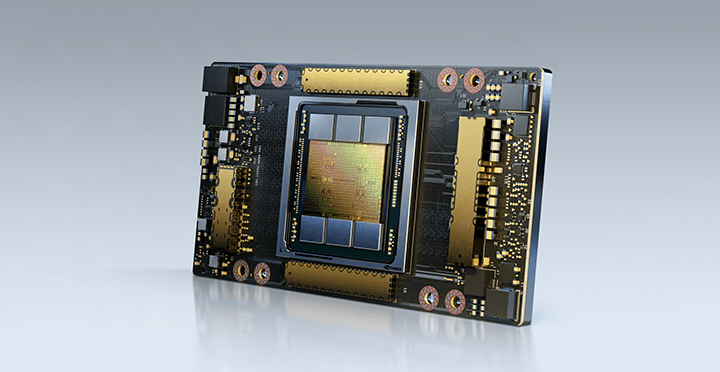

NVIDIA A100 Tensor Core GPU

Unprecedented acceleration at every scale

Enterprise-Ready Software for AI

The NVIDIA EGX™ platform includes optimized software that delivers accelerated computing across the infrastructure. With NVIDIA AI Enterprise, businesses can access an end-to-end, cloud-native suite of AI and data analytics software that’s optimized, certified, and supported by NVIDIA to run on VMware vSphere with NVIDIA-Certified Systems. NVIDIA AI Enterprise includes key enabling technologies from NVIDIA for rapid deployment, management, and scaling of AI workloads in the modern hybrid cloud.

The Most Powerful End-to-End AI and HPC Data Center Platform

A100 is part of the complete NVIDIA data center solution that incorporates building blocks across hardware, networking, software, libraries, and optimized AI models and applications from NGC™. Representing the most powerful end-to-end AI and HPC platform for data centers, it allows researchers to rapidly deliver real-world results and deploy solutions into production at scale.

Deep Learning Training

Up to 3X Higher AI Training on Largest Models

DLRM Training DLRM on HugeCTR framework, precision = FP16 | NVIDIA A100 80GB batch size = 48 | NVIDIA A100 40GB batch size = 32 | NVIDIA V100 32GB batch size = 32.

AI models are exploding in complexity as they take on next-level challenges such as conversational AI. Training them requires massive compute power and scalability.

NVIDIA A100 Tensor Cores with Tensor Float (TF32) provide up to 20X higher performance over the NVIDIA Volta with zero code changes and an additional 2X boost with automatic mixed precision and FP16. When combined with NVIDIA® NVLink®, NVIDIA NVSwitch™, PCI Gen4, NVIDIA® InfiniBand®, and the NVIDIA Magnum IO™ SDK, it’s possible to scale to thousands of A100 GPUs.

A training workload like BERT can be solved at scale in under a minute by 2,048 A100 GPUs, a world record for time to solution.

For the largest models with massive data tables like deep learning recommendation models (DLRM), A100 80GB reaches up to 1.3 TB of unified memory per node and delivers up to a 3X throughput increase over A100 40GB.

NVIDIA’s leadership in MLPerf, setting multiple performance records in the industry-wide benchmark for AI training.

Deep Learning Inference

A100 introduces groundbreaking features to optimize inference workloads. It accelerates a full range of precision, from FP32 to INT4. Multi-Instance GPU (MIG) technology lets multiple networks operate simultaneously on a single A100 for optimal utilization of compute resources. And structural sparsity support delivers up to 2X more performance on top of A100’s other inference performance gains.

On state-of-the-art conversational AI models like BERT, A100 accelerates inference throughput up to 249X over CPUs.

On the most complex models that are batch-size constrained like RNN-T for automatic speech recognition, A100 80GB’s increased memory capacity doubles the size of each MIG and delivers up to 1.25X higher throughput over A100 40GB.

NVIDIA’s market-leading performance was demonstrated in MLPerf Inference. A100 brings 20X more performance to further extend that leadership.

Up to 249X Higher AI Inference Performance Over CPUs BERT-LARGE Inference

BERT-Large Inference | CPU only: Xeon Gold 6240 @ 2.60 GHz, precision = FP32, batch size = 128 | V100: NVIDIA TensorRT™ (TRT) 7.2, precision = INT8, batch size = 256 | A100 40GB and 80GB, batch size = 256, precision = INT8 with sparsity.

Up to 1.25X Higher AI Inference Performance Over A100 40GB,RNN-T Inference: Single Stream

MLPerf 0.7 RNN-T measured with (1/7) MIG slices. Framework: TensorRT 7.2, dataset = LibriSpeech, precision = FP16.

High-Performance Computing

To unlock next-generation discoveries, scientists look to simulations to better understand the world around us.

NVIDIA A100 introduces double precision Tensor Cores to deliver the biggest leap in HPC performance since the introduction of GPUs. Combined with 80GB of the fastest GPU memory, researchers can reduce a 10-hour, double-precision simulation to under four hours on A100. HPC applications can also leverage TF32 to achieve up to 11X higher throughput for single-precision, dense matrix-multiply operations.

For the HPC applications with the largest datasets, A100 80GB’s additional memory delivers up to a 2X throughput increase with Quantum Espresso, a materials simulation. This massive memory and unprecedented memory bandwidth makes the A100 80GB the ideal platform for next-generation workloads.

11X More HPC Performance in Four Years Top HPC Apps

Geometric mean of application speedups vs. P100: Benchmark application: Amber [PME-Cellulose_NVE], Chroma [szscl21_24_128], GROMACS [ADH Dodec], MILC [Apex Medium], NAMD [stmv_nve_cuda], PyTorch (BERT-Large Fine Tuner], Quantum Espresso [AUSURF112-jR]; Random Forest FP32 [make_blobs (160000 x 64 : 10)], TensorFlow [ResNet-50], VASP 6 [Si Huge] | GPU node with dual-socket CPUs with 4x NVIDIA P100, V100, or A100 GPUs.

Up to 1.8X Higher Performance for HPC Applications Quantum Espresso

Quantum Espresso measured using CNT10POR8 dataset, precision = FP64.

High-Performance Data Analytics

2X Faster than A100 40GB on Big Data Analytics Benchmark

Big data analytics benchmark | 30 analytical retail queries, ETL, ML, NLP on 10TB dataset | V100 32GB, RAPIDS/Dask | A100 40GB and A100 80GB, RAPIDS/Dask/BlazingSQL

Data scientists need to be able to analyze, visualize, and turn massive datasets into insights. But scale-out solutions are often bogged down by datasets scattered across multiple servers.

Accelerated servers with A100 provide the needed compute power—along with massive memory, over 2 TB/sec of memory bandwidth, and scalability with NVIDIA® NVLink® and NVSwitch™, —to tackle these workloads. Combined with InfiniBand, NVIDIA Magnum IO™ and the RAPIDS™ suite of open-source libraries, including the RAPIDS Accelerator for Apache Spark for GPU-accelerated data analytics, the NVIDIA data center platform accelerates these huge workloads at unprecedented levels of performance and efficiency.

On a big data analytics benchmark, A100 80GB delivered insights with a 2X increase over A100 40GB, making it ideally suited for emerging workloads with exploding dataset sizes.

Enterprise-Ready Utilization

7X Higher Inference Throughput with Multi-Instance GPU (MIG)

BERT Large Inference

BERT Large Inference | NVIDIA TensorRT™ (TRT) 7.1 | NVIDIA T4 Tensor Core GPU: TRT 7.1, precision = INT8, batch size = 256 | V100: TRT 7.1, precision = FP16, batch size = 256 | A100 with 1 or 7 MIG instances of 1g.5gb: batch size = 94, precision = INT8 with sparsity.

A100 with MIG maximizes the utilization of GPU-accelerated infrastructure. With MIG, an A100 GPU can be partitioned into as many as seven independent instances, giving multiple users access to GPU acceleration. With A100 40GB, each MIG instance can be allocated up to 5GB, and with A100 80GB’s increased memory capacity, that size is doubled to 10GB.

MIG works with Kubernetes, containers, and hypervisor-based server virtualization. MIG lets infrastructure managers offer a right-sized GPU with guaranteed quality of service (QoS) for every job, extending the reach of accelerated computing resources to every user.

Data Center GPUs

NVIDIA A100 for HGX

Ultimate performance for all workloads.

NVIDIA A100 for PCIe

Highest versatility for all workloads.

Specifications

| A100 80GB PCIe | A100 80GB SXM | |||

|---|---|---|---|---|

| FP64 | 9.7 TFLOPS | |||

| FP64 Tensor Core | 19.5 TFLOPS | |||

| FP32 | 19.5 TFLOPS | |||

| Tensor Float 32 (TF32) | 156 TFLOPS | 312 TFLOPS* | |||

| BFLOAT16 Tensor Core | 312 TFLOPS | 624 TFLOPS* | |||

| FP16 Tensor Core | 312 TFLOPS | 624 TFLOPS* | |||

| INT8 Tensor Core | 624 TOPS | 1248 TOPS* | |||

| GPU Memory | 80GB HBM2e | 80GB HBM2e | ||

| GPU Memory Bandwidth | 1,935 GB/s | 2,039 GB/s | ||

| Max Thermal Design Power (TDP) | 300W | 400W *** | ||

| Multi-Instance GPU | Up to 7 MIGs @ 10GB | Up to 7 MIGs @ 10GB | ||

| Form Factor | PCIe Dual-slot air-cooled or single-slot liquid-cooled | SXM | ||

| Interconnect | NVIDIA® NVLink® Bridge for 2 GPUs: 600 GB/s ** PCIe Gen4: 64 GB/s | NVLink: 600 GB/s PCIe Gen4: 64 GB/s | ||

| Server Options | Partner and NVIDIA-Certified Systems™ with 1-8 GPUs | NVIDIA HGX™ A100-Partner and NVIDIA-Certified Systems with 4,8, or 16 GPUs NVIDIA DGX™ A100 with 8 GPUs | ||

* With sparsity

** SXM4 GPUs via HGX A100 server boards; PCIe GPUs via NVLink Bridge for up to two GPUs

*** 400W TDP for standard configuration. HGX A100-80GB custom thermal solution (CTS) SKU can support TDPs up to 500W

Inside the NVIDIA Ampere Architecture

Learn what’s new with the NVIDIA Ampere architecture and its implementation in the NVIDIA A100 GPU.

MacBook

MacBook iPad

iPad Apple Watch

Apple Watch Airpods

Airpods iMac

iMac Studio Display

Studio Display iphone

iphone

Gaming Laptop

Gaming Laptop

Gaming Desktop

Gaming Desktop

DDR5 Desktop

DDR5 Desktop DDR5 Laptop

DDR5 Laptop DDR5 Server

DDR5 Server DDR4 Desktop

DDR4 Desktop DDR4 Laptop

DDR4 Laptop DDR4 Server

DDR4 Server DDR3 Desktop

DDR3 Desktop DDR3 Laptop

DDR3 Laptop DDR3 Server

DDR3 Server

Intel Socket

Intel Socket Intel Z890

Intel Z890 Intel B860

Intel B860 Intel B760

Intel B760 Intel H770

Intel H770 Intel B660

Intel B660 Intel H670

Intel H670 Intel H610

Intel H610 Intel Z690

Intel Z690 Intel H510

Intel H510 Intel Z590

Intel Z590 Intel B560

Intel B560 Intel H470

Intel H470 Intel Z490

Intel Z490 Intel H410

Intel H410 Intel B460

Intel B460 Intel H310

Intel H310 Intel B360

Intel B360 Intel B365

Intel B365 Intel X299

Intel X299 Intel Z390

Intel Z390 Intel Z370

Intel Z370 Intel H370

Intel H370 Intel Z270 H270

Intel Z270 H270 Intel B250

Intel B250 Intel Z170 H170

Intel Z170 H170 Intel H110

Intel H110 Intel H81

Intel H81 Intel B85

Intel B85 Intel H61

Intel H61 Intel B150

Intel B150 AMD Socket

AMD Socket AMD B850

AMD B850 AMD B840

AMD B840 AMD TRX50

AMD TRX50 AMD A620

AMD A620 AMD X870

AMD X870 AMD B650

AMD B650 AMD A520

AMD A520 AMD TRX40

AMD TRX40 AMD B550

AMD B550 AMD X570

AMD X570 AMD X470

AMD X470 AMD B450

AMD B450 AMD X370

AMD X370 AMD A320

AMD A320 AMD B350

AMD B350 AMD X399

AMD X399 AMD A88

AMD A88 AMD A68 A78

AMD A68 A78

Cpu Air Coolers

Cpu Air Coolers CPU Liquid Coolers

CPU Liquid Coolers Fans

Fans

AMD CPUs Desktop

AMD CPUs Desktop AMD Server CPU

AMD Server CPU Intel Server CPU

Intel Server CPU Samsung CPUs

Samsung CPUs Other special CPUs

Other special CPUs

Solid State Drives

Solid State Drives NVMe PCIe M.2

NVMe PCIe M.2 SATA 2.5inch

SATA 2.5inch Hard Disk Drive

Hard Disk Drive Server Hard Drives

Server Hard Drives NAS hard drive

NAS hard drive Monitoring hard drive

Monitoring hard drive Portable Solid State Drives

Portable Solid State Drives Memory Cards

Memory Cards USB Flash Drives

USB Flash Drives

Nvidia GPU

Nvidia GPU RTX 50 series

RTX 50 series RTX 30 series

RTX 30 series GTX 16 series

GTX 16 series GTX 10 series

GTX 10 series RX 9000 series

RX 9000 series RX 7000 series

RX 7000 series RX 6000 series

RX 6000 series RX 5000 series

RX 5000 series RX 500 series

RX 500 series RTX 20 series

RTX 20 series

Rack server

Rack server Blade server

Blade server Tower server

Tower server Storage Server Solutions

Storage Server Solutions Network switch

Network switch

Workstation

Workstation Mobile Workstation

Mobile Workstation

Server motherboard

Server motherboard Workstation Motherboard

Workstation Motherboard

PSP3000索尼原装街机掌机-150x150.jpg) SONY Gaming Console

SONY Gaming Console ASUS Gaming Console

ASUS Gaming ConsoleLegion-Go-游戏掌机手持设备-150x150.jpg) Lenovo Gaming Console

Lenovo Gaming Console One XPlayer

One XPlayer日版-Xbox-Series-X-XSX次世代-150x150.jpg) Microsoft Gaming Console

Microsoft Gaming Console XBOX Gaming Console

XBOX Gaming ConsoleMSI-Claw-A1M-050US-游戏掌机-150x150.jpg) MSI Gaming Console

MSI Gaming Console

Motherboard

Motherboard GTX TITAN

GTX TITAN Computer Cases

Computer Cases